An LMS dashboard is supposed to help training leaders, LMS administrators, instructors, and people managers make faster, better decisions. In practice, most dashboards do the opposite: they create noise, bury priorities, and turn learning data into passive reporting.

That failure is expensive. When decision-makers cannot quickly spot overdue compliance, at-risk learners, weak course performance, or team readiness gaps, training becomes reactive. Interventions happen late. Managers lose trust in the numbers. And executives start questioning whether the LMS is delivering measurable business value at all.

If you are responsible for learning operations, enablement, compliance, or workforce development, the goal is not to build a prettier dashboard. The goal is to build a decision system that tells each user what matters, why it matters, and what should happen next.

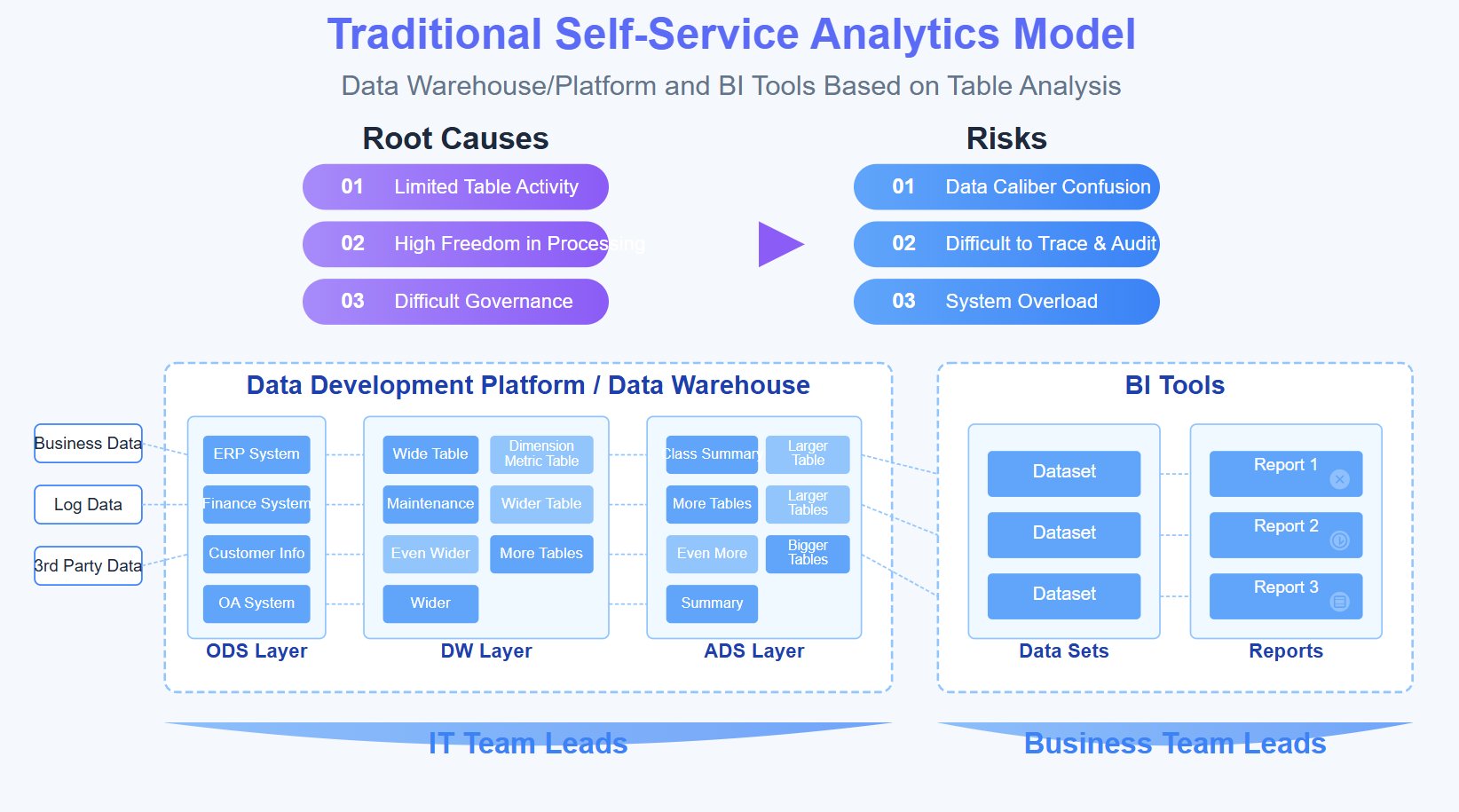

Most dashboard failures are not technical failures. They are design and governance failures.

Many LMS dashboards show logins, clicks, page views, and attendance events as if more data automatically means more clarity. It does not.

Activity data is only useful when it answers an operational question such as:

An LMS dashboard that shows only volume metrics creates visibility without action.

A common pattern is metric overload: completion rates, enrollments, quiz averages, total sessions, time spent, badges earned, and dozens of secondary stats all on one screen.

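The problem is not that these metrics are wrong. The problem is that they are often disconnected from the outcomes leaders actually care about, such as compliance by deadline, speed to proficiency, certification risk, course drop-off, and team readiness.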

If a metric does not support a real decision, it does not belong in the primary view.

Many LMS dashboards are built from the perspective of the system owner. That leads to admin-heavy interfaces full of configuration summaries, system counts, and broad reports.

But the people who need to act are usually different: instructors, managers, learners, and executives.

When one dashboard tries to serve everyone equally, it serves no one well.

A reporting layer tells users what happened. A decision system helps them prioritize what to do next.

That distinction is the difference between a dashboard that gets opened once a month and one that becomes part of daily management routines.

An effective LMS dashboard should reduce ambiguity. It should help each role make a small set of recurring decisions with confidence and speed.

This is one of the highest-value use cases. Your dashboard should quickly identify:

The key is timeliness. If the dashboard only surfaces issues after the deadline has passed, it is not decision support.
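As a minimal sketch of what timely surfacing could look like, the snippet below classifies each assignment before its deadline passes. The `Assignment` fields, the status labels, and the 14-day warning window are all illustrative assumptions, not part of any specific LMS.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Assignment:
    # Hypothetical record shape; a real LMS export will differ.
    learner: str
    course: str
    due: date
    completed: bool


def compliance_status(a: Assignment, today: date, warn_days: int = 14) -> str:
    """Classify an assignment so risk is visible before the deadline passes."""
    if a.completed:
        return "complete"
    if a.due < today:
        return "overdue"
    # Assumed warning window: flag anything due within `warn_days` days.
    if a.due - today <= timedelta(days=warn_days):
        return "due_soon"
    return "on_track"


today = date(2025, 3, 1)
assignments = [
    Assignment("ana", "Safety 101", date(2025, 2, 20), False),
    Assignment("ben", "Safety 101", date(2025, 3, 10), False),
    Assignment("cal", "Safety 101", date(2025, 4, 30), False),
    Assignment("dee", "Safety 101", date(2025, 2, 20), True),
]
statuses = {a.learner: compliance_status(a, today) for a in assignments}
```

The `due_soon` bucket is the part that makes this decision support rather than reporting: it gives a manager something to act on while the deadline can still be met.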

Good learning teams do not just monitor learner behavior. They also evaluate content and delivery quality.

Your dashboard should reveal:

This shifts the conversation from “Are people completing training?” to “Is the training working?”

Enterprise stakeholders need evidence that learning contributes to operational goals.

A decision-ready LMS dashboard should connect learning to outcomes such as:

That does not require perfect attribution. It requires disciplined metric selection and consistent definitions.

The best dashboards do not stop at diagnosis. They point toward action.

Examples include:

Static reporting is useful for audits, monthly reviews, and executive summaries. It helps document the past.

Examples include:

These are useful, but they do not necessarily improve day-to-day decisions.

Decision support focuses on urgency, prioritization, and action.

Examples include:

That is the standard your LMS dashboard should aim for.

Role-based design is not optional. It is the foundation of an effective LMS dashboard strategy.

Admins need a broad view, but not a cluttered one. Their dashboard should answer:

Instructors need to act on classroom and course signals quickly. Their dashboard should answer:

Managers care less about platform activity and more about workforce readiness. Their dashboard should answer:

Learners need clarity, not analytics overload. Their dashboard should answer:

A strong LMS dashboard starts with operating decisions, not visual design. Build backward from the actions you want people to take.

Before selecting charts or KPIs, define the high-value decisions by role.

For example:

If a dashboard element does not support one of these decisions, remove it from the primary screen.

Balanced dashboards use both early-warning signals and outcome measures.

This combination helps teams intervene before failure becomes visible in monthly reporting.

Many LMS dashboards fail because users compare numbers built on inconsistent logic.

Standardize:

Without this foundation, the dashboard creates argument instead of alignment.
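To illustrate how inconsistent logic produces conflicting numbers, here is a small sketch in which the same data yields two different completion rates depending on who counts in the denominator. The `Enrollment` fields and the `include_inactive` switch are hypothetical; the point is only that the rule should live in one shared definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Enrollment:
    learner: str
    active: bool      # hypothetical flag: learner is still in scope
    completed: bool


def completion_rate(enrollments, include_inactive: bool = False) -> float:
    """Single shared definition: completed learners / learners in scope."""
    population = [e for e in enrollments if include_inactive or e.active]
    if not population:
        return 0.0
    return sum(e.completed for e in population) / len(population)


rows = [
    Enrollment("ana", True, True),
    Enrollment("ben", True, False),
    Enrollment("cal", False, False),
    Enrollment("dee", True, True),
]

# Same data, two denominators, two different answers.
rate_active_only = completion_rate(rows)
rate_everyone = completion_rate(rows, include_inactive=True)
```

If one team reports the first number and another reports the second, both are "right" and neither is trusted. Agreeing on one definition and documenting it removes that argument.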

This is the most practical test for every KPI on your dashboard.

If a metric changes, what action should follow?

If there is no obvious action, the metric likely belongs in a secondary report, not the dashboard homepage.

The right KPI set is compact, role-specific, and operationally meaningful.

These are foundational because they combine output and early-warning visibility.

Together, they help answer:

Raw activity counts can be misleading. More clicks do not equal better learning.

Focus on engagement patterns that reveal obstacles, such as:

The point is not to prove learners are active. The point is to identify where progress breaks down.
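One way to locate where progress breaks down is to compare how many learners reach each step of a course. The sketch below assumes a simple ordered funnel of (module name, learners reached) pairs; the module names and counts are made up for illustration.

```python
def largest_drop_off(funnel):
    """Return (from_module, to_module, drop_fraction) for the steepest loss.

    `funnel` is an ordered list of (module name, learners who reached it).
    """
    worst = None
    for (prev_name, prev_n), (name, n) in zip(funnel, funnel[1:]):
        if prev_n == 0:
            continue  # nothing to lose between these two steps
        drop = (prev_n - n) / prev_n
        if worst is None or drop > worst[2]:
            worst = (prev_name, name, drop)
    return worst


# Illustrative counts only.
funnel = [("Intro", 200), ("Module 1", 180), ("Module 2", 90), ("Quiz", 81)]
worst = largest_drop_off(funnel)
```

Here the total activity looks healthy at the start, but half of the learners who finish Module 1 never reach Module 2, which is the obstacle worth investigating.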

These metrics matter because they connect directly to operational risk.

For many enterprises, the most valuable LMS dashboard is the one that helps answer:

Action-oriented design turns analytics into management behavior.

Executives and managers should not need to inspect every chart manually.

Use threshold-based logic such as:

Exceptions are often more valuable than averages.
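A rough sketch of threshold-based exception reporting follows. The metric names, the 80% completion floor, and the limit of 5 overdue assignments are assumed values chosen for the example, not recommended thresholds.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    metric: str
    breached: Callable[[float], bool]
    message: str


# Assumed thresholds for illustration only.
RULES = [
    Rule("completion_rate", lambda v: v < 0.80, "Completion below 80%"),
    Rule("overdue_count", lambda v: v > 5, "More than 5 overdue assignments"),
]


def exceptions(teams: dict) -> list:
    """Return (team, message) pairs only for metrics that breach a rule."""
    alerts = []
    for team, metrics in teams.items():
        for rule in RULES:
            value = metrics.get(rule.metric)
            if value is not None and rule.breached(value):
                alerts.append((team, rule.message))
    return alerts


teams = {
    "Sales": {"completion_rate": 0.92, "overdue_count": 1},
    "Support": {"completion_rate": 0.71, "overdue_count": 8},
    "Ops": {"completion_rate": 0.85, "overdue_count": 6},
}

alerts = exceptions(teams)
average_completion = sum(m["completion_rate"] for m in teams.values()) / len(teams)
```

In this made-up data the average completion rate sits above the 80% floor, so an averages-only view would show nothing wrong, while the exception list points directly at Support and Ops.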

A good dashboard starts broad and moves smoothly to specifics.

For example:

This drill-down flow reduces the need for separate exports and side reports.
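The drill-down idea can be sketched as one function that reuses the same calculation at every level. The region, team, and learner hierarchy and the record fields below are assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical flat records; a real LMS export will have different fields.
records = [
    {"region": "EMEA", "team": "Sales", "learner": "ana", "completed": True},
    {"region": "EMEA", "team": "Sales", "learner": "ben", "completed": False},
    {"region": "EMEA", "team": "Support", "learner": "cal", "completed": True},
    {"region": "APAC", "team": "Sales", "learner": "dee", "completed": True},
    {"region": "APAC", "team": "Sales", "learner": "eli", "completed": True},
]


def drill_down(rows, group_by, **filters):
    """Completion rate per `group_by` value, limited to rows matching filters."""
    totals = defaultdict(lambda: [0, 0])  # key -> [completed, total]
    for r in rows:
        if all(r[k] == v for k, v in filters.items()):
            totals[r[group_by]][0] += int(r["completed"])
            totals[r[group_by]][1] += 1
    return {key: done / total for key, (done, total) in totals.items()}


by_region = drill_down(records, "region")
by_team = drill_down(records, "team", region="EMEA")
by_learner = drill_down(records, "learner", region="EMEA", team="Sales")
```

Each call narrows the previous one by adding a filter, so a user can move from the weakest region to the weakest team to the specific learner without leaving the dashboard.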

Do not make users interpret every anomaly from scratch.

Examples of recommended actions:

This is where an LMS dashboard becomes a practical operating tool.

One of the fastest ways to improve adoption is to stop forcing every role into the same interface.

Each role should have a distinct landing view, KPI set, and recommended actions.

A practical structure looks like this:

This is the discipline most teams skip.

For example, a learner cannot act on organization-wide adoption trends. An executive does not need granular session-level click behavior. A manager should not need to parse admin configuration summaries.

Keep the interface narrow enough to be useful.
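One possible way to encode role-specific views is a small configuration map with a cap on primary KPIs. Every view name, KPI name, action name, and the cap of 4 below is hypothetical and only meant to show the shape of such a configuration.

```python
from dataclasses import dataclass

MAX_PRIMARY_KPIS = 4  # assumed cap to keep each landing view narrow


@dataclass(frozen=True)
class RoleView:
    landing_view: str
    kpis: tuple
    actions: tuple


# Hypothetical names throughout; replace with your own definitions.
ROLE_VIEWS = {
    "admin": RoleView(
        "platform_overview",
        ("overdue_compliance", "data_sync_errors"),
        ("escalate_overdue",),
    ),
    "instructor": RoleView(
        "course_health",
        ("at_risk_learners", "module_drop_off"),
        ("message_learner",),
    ),
    "manager": RoleView(
        "team_readiness",
        ("team_completion", "expiring_certifications"),
        ("nudge_direct_report",),
    ),
    "learner": RoleView("my_training", ("next_due_course",), ("resume_course",)),
}


def view_for(role: str) -> RoleView:
    """Look up a role's view and enforce the KPI cap."""
    if role not in ROLE_VIEWS:
        raise ValueError(f"No dashboard view defined for role: {role!r}")
    view = ROLE_VIEWS[role]
    if len(view.kpis) > MAX_PRIMARY_KPIS:
        raise ValueError(f"Too many primary KPIs for role: {role!r}")
    return view
```

Keeping the cap in code rather than in a style guide means a view cannot quietly grow past it during later iterations.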

You do not need to reinvent the dashboard from scratch. Strong models already exist across education, corporate learning, and cloud LMS platforms. The key is to borrow patterns, not copy layouts blindly.

Instructor and teacher dashboard models often work well because they are built around immediate action.

Useful patterns include:

These models work because they prioritize intervention over presentation.

In enterprise settings, the most valuable dashboards usually focus on risk and operational readiness.

Typical strengths include:

This is especially important in regulated industries, distributed workforces, and frontline training environments.

Many organizations use LMS plugins, extensions, or configurable cloud tools. These can be effective if they avoid over-customization.

The best implementations usually provide:

Too much configurability often leads to dashboard sprawl. Governance matters more than feature count.

The best teacher-style dashboards make learner risk visible in seconds.

Borrow patterns like:

These visual cues accelerate action.

Instructors should not have to open three separate tools to understand learner health.

Combine:

That creates context for smarter follow-up.

Strong dashboard design is often less about the visuals themselves and more about restraint.

Borrow these principles:

An LMS dashboard should feel immediately interpretable.

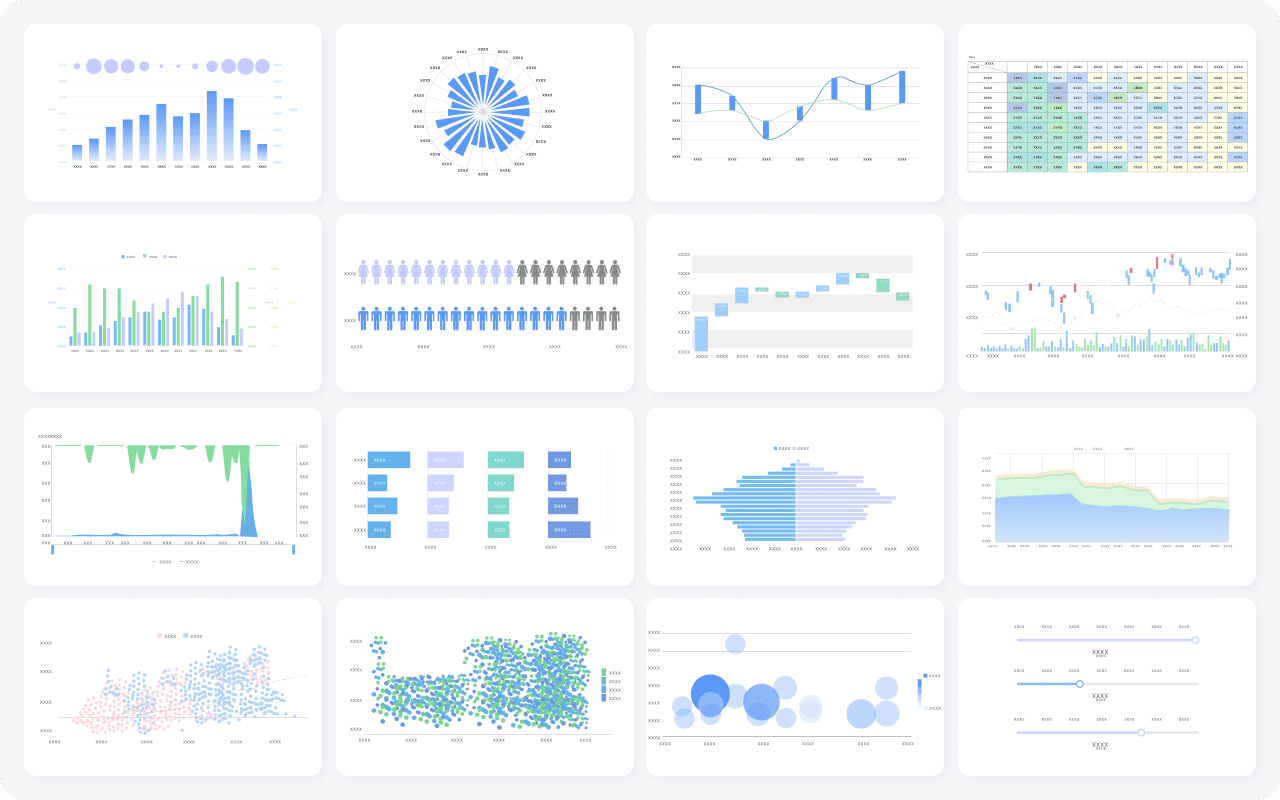

Some of the most effective dashboard components are also the simplest:

This mix supports both executive scanning and operational action.

Even technically polished dashboards fail if the rollout strategy is weak.

A dashboard design that works for a school, coaching business, or course marketplace may fail in enterprise compliance or workforce readiness contexts.

Always adapt the structure to your own use cases:

Use external examples as inspiration, not templates.

Every chart should have a clear owner.

If no one is responsible for acting on a metric, it should not dominate the dashboard homepage.

A useful rule: if a chart has no owner, no threshold, and no follow-up process, demote it to a secondary report.
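The rule above can be expressed as a simple audit over chart metadata. The `Chart` fields and the intermediate `review` outcome for partially specified charts are assumptions added for this sketch; the source rule only covers the case where all three are missing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Chart:
    name: str
    owner: Optional[str] = None
    threshold: Optional[float] = None
    follow_up: Optional[str] = None


def placement(chart: Chart) -> str:
    """Decide where a chart belongs based on owner, threshold, and follow-up."""
    missing = [
        attr
        for attr in ("owner", "threshold", "follow_up")
        if getattr(chart, attr) is None
    ]
    if not missing:
        return "homepage"
    if len(missing) == 3:
        return "secondary_report"  # no owner, no threshold, no follow-up
    return "review"  # assumed middle state: fill the gaps or demote


charts = [
    Chart("Overdue compliance", "Compliance lead", 0.95, "Weekly escalation"),
    Chart("Total logins"),
    Chart("Quiz average", owner="Instructor"),
]
placements = {c.name: placement(c) for c in charts}
```

Running an audit like this before each release gives governance a concrete checklist instead of a debate about which charts feel important.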

Nothing destroys adoption faster than unclear metric logic.

Users need to know:

Transparent definitions create confidence and reduce rework.

Many teams treat launch as the finish line. It is not.

The real test begins after users rely on the dashboard in live workflows. If managers still export spreadsheets, if instructors still maintain shadow trackers, or if executives still request side reports, the dashboard is not yet solving the core problem.

A disciplined rollout improves both adoption and dashboard quality.

Start with a focused scenario, such as:

This reduces complexity and makes feedback actionable.

Measure behavior change, not just dashboard views.

Ask:

That is how you validate decision impact.

Expect multiple iterations.

Typical refinements include:

The best LMS dashboard implementations evolve through operational use.

A dashboard is successful when it changes behavior in measurable ways.

If your dashboard works, teams should spot risk earlier and act sooner.

Look for:

Interventions should become more precise, not just more frequent.

That means:

Your content team should be able to identify exactly where course design is failing.

Signs of progress include:

At the enterprise level, this is where trust is won.

You should be able to show clearer relationships between training and outcomes such as:

An LMS dashboard should be governed continuously, not left untouched after launch.

Metrics that do not drive action should be removed, reframed, or moved to secondary reporting.

This is one of the strongest indicators of a design gap. If users still rely on spreadsheets or custom exports, the dashboard is not fully aligned to their decisions.

This is the ultimate success test.

Good dashboards improve:

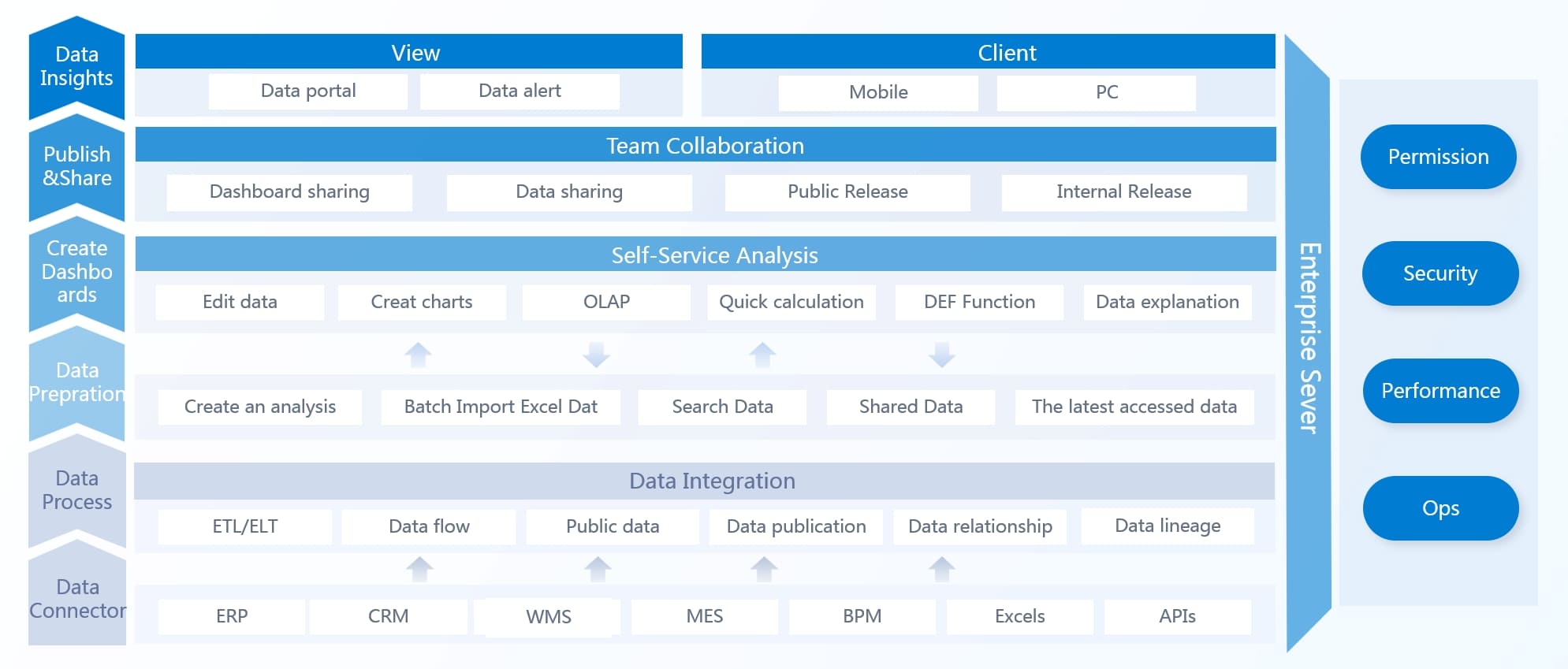

Building a truly effective LMS dashboard manually is harder than most organizations expect. You need reliable LMS data pipelines, consistent KPI definitions, role-based access logic, alerting rules, drill-down workflows, visual hierarchy standards, and a governance model that keeps the whole system trustworthy over time.

That is why many LMS dashboard projects stall between reporting and real decision support.

A better path is to use FineBI to accelerate the work. Instead of stitching together fragmented reports and custom views from scratch, you can use ready-made templates, build role-based dashboards faster, and automate the workflow from data integration to decision-ready visualization.

With FineBI, teams can:

The strategic advantage is simple: building all of this manually is complex, while FineBI lets you start from ready-made templates and automate the workflow.

If your current LMS dashboard is generating more reports than decisions, that is your signal to redesign the system around action. Start with the decisions, narrow the KPIs, build role-specific views, and use FineBI to operationalize the model at scale.

A useful LMS dashboard helps each role see priorities, risks, and the next best action instead of just showing raw activity data. It should make overdue training, learner risk, and content issues obvious at a glance.

Most fail because they track too many disconnected metrics, serve everyone with the same view, and focus on reporting instead of action. The result is data visibility without clear decisions.

Prioritize metrics tied to real outcomes like compliance by deadline, speed to proficiency, certification risk, course drop-off, and team readiness. If a metric does not support a decision, it should not dominate the main dashboard.

A standard report explains what happened in the past, while a decision dashboard highlights what needs attention now. The best dashboards also suggest what managers, instructors, or admins should do next.

Learners, managers, instructors, administrators, and executives should each see different dashboard views based on their responsibilities. Role-based dashboards reduce noise and make it easier for each user to act quickly.

The Author

Yida Yin

FanRuan Industry Solutions Expert
