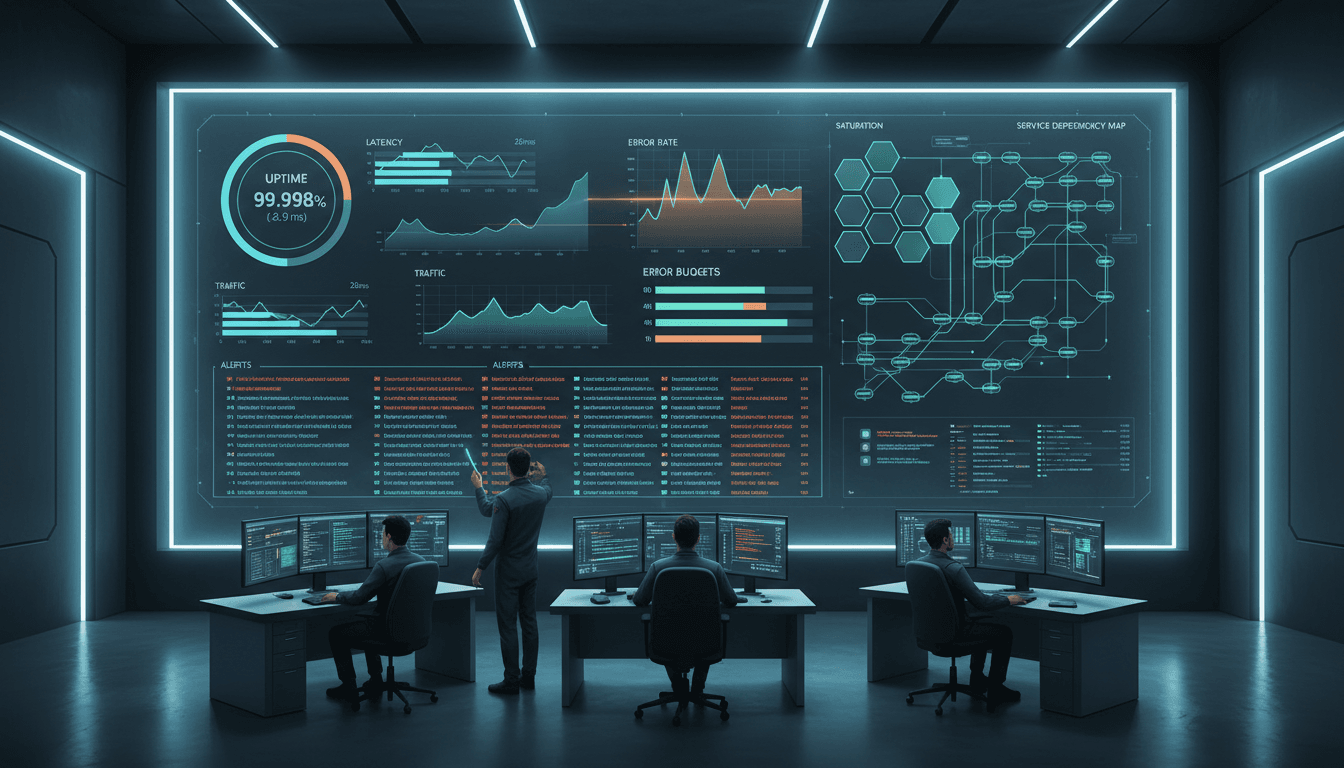

An effective sre dashboard is more than a screen full of charts. It is a decision tool that helps teams understand system health, respond faster during incidents, and improve reliability over time. When built well, it gives on-call engineers the signals they need in seconds, while also helping service owners and leadership track risk, performance, and reliability commitments.

In this guide, you will learn what an SRE dashboard is, how to build one for real-time monitoring, which seven metrics matter most, and what practical dashboard patterns work in the real world.

An sre dashboard is a prebuilt view of the most important reliability and performance signals for a service or platform. In plain language, it is the place your team goes to answer questions like:

Site Reliability Engineering focuses on keeping services dependable while balancing speed, change, and risk. A dashboard supports that goal by turning raw telemetry into something people can quickly understand and act on. Instead of forcing engineers to search through dozens of tools during a problem, a strong dashboard brings the most useful metrics together in one place.

Not all dashboards serve the same purpose. In practice, teams usually need different views for different jobs:

That distinction matters. A dashboard for an on-call responder should not look the same as a dashboard for engineering leadership. One needs rapid triage. The other needs a clear view of reliability posture, incident load, and error budget risk.

A good sre dashboard also helps teams shift from reactive monitoring to proactive action. Instead of noticing problems only after users complain, teams can spot rising latency, growing saturation, abnormal error patterns, or rapid error budget burn before the issue becomes a full outage. That is the real value: not more data, but earlier and better decisions.

The fastest way to create a bad dashboard is to start with charts instead of users. A useful sre dashboard begins with the people who will rely on it and the questions they need answered.

Different audiences need different levels of detail:

If one dashboard tries to satisfy everyone equally, it usually satisfies no one. A better approach is to create a focused operational dashboard for responders, then build supporting views for management, planning, and service reviews.

Before you add a single panel, define the questions the dashboard must answer during both normal operations and incidents. For example:

These questions prevent the dashboard from becoming a visual dump of every metric you can collect. If a chart does not help answer an operational question, it probably does not belong on the main dashboard.

A real-time monitoring dashboard depends on timely and trustworthy data. Most teams pull from a mix of:

Refresh intervals should match the use case. Critical operational views often need near-real-time updates, while trend or planning panels can refresh less frequently. It also helps to link directly from charts to related alerts, logs, traces, and runbooks so that the dashboard becomes the first step in action, not the last step in observation.

A strong sre dashboard should be readable in a few seconds.

Put the most important user-facing health signals at the top. Place supporting technical and dependency information below. Keep visual patterns consistent so engineers do not have to relearn the interface during every incident.

A practical top-to-bottom layout often looks like this:

This structure helps teams move from “something is wrong” to “what is affected” to “where should we look next” without switching context.

The exact composition of an sre dashboard will vary by system, but some metrics are nearly universal. These seven categories provide a solid foundation for real-time monitoring and reliability management.

Availability tells you whether the service is working from the user’s perspective. This is one of the first things responders and stakeholders want to know during an issue.

Track availability against reliability targets, not just raw uptime percentages. A service showing 99.95% uptime may still be burning error budget too quickly if failures are concentrated during peak usage. Include customer-impact views where possible, such as successful request rate, regional availability, or endpoint-specific health.

Good availability panels often include:

This keeps the dashboard focused on actual service health rather than internal system assumptions.

Latency is one of the clearest indicators of user experience. Even when a service is technically “up,” it may still be failing users if responses are too slow.

For an sre dashboard, percentile-based views are more useful than averages. Averages can hide serious issues, while percentiles like p50, p95, and p99 reveal how bad the worst user experiences are becoming.

Useful latency views include:

During incidents, rising latency often shows up before total failure. That makes it a key early-warning signal.

Error rate helps teams detect failures quickly and understand whether an issue is spreading. This metric should separate meaningful failures from harmless noise whenever possible.

For example, a burst of retries or client disconnects may not mean the same thing as increasing 5xx server responses. Segment errors by type and severity so responders can distinguish between a brief anomaly and a true incident.

A practical error section might show:

A dashboard that highlights error type and context is far more useful than one that simply turns red when anything fails.

Traffic tells you about demand. Saturation tells you how hard the system is working. Capacity helps you understand how close you are to your limits. These signals are strongest when viewed together.

Traffic alone may show a spike, but that may not matter if the system still has headroom. CPU alone may show high utilization, but that may not matter if user-facing performance is stable. The real insight comes from combining demand, resource pressure, and trend data.

Track indicators such as:

This group of metrics supports both incident response and capacity planning. It also helps teams catch overload conditions before they become outages.

Service level indicators, or SLIs, measure what users actually experience. Error budgets translate reliability targets into a practical operating model. Together, they make an sre dashboard much more valuable because they connect technical telemetry to service commitments.

A dashboard should clearly show:

This helps teams make better decisions about deployments, feature launches, and operational risk. If the error budget is burning quickly, that should be visible immediately. If the service is stable and the budget is healthy, teams can move faster with more confidence.

A dashboard should not only monitor the system. It should also help teams monitor the quality of their operational process.

If alert volume is too high, responders get fatigued. If alerts are slow or unclear, incidents drag on longer than necessary. Tracking alert and incident quality helps improve the reliability program itself.

Useful measures include:

This section is especially useful for weekly reviews and operational improvement. It shows whether the monitoring system is helping engineers or overwhelming them.

Modern services rarely fail alone. Databases, message brokers, third-party APIs, caches, internal services, and network layers can all contribute to an incident. That is why a mature sre dashboard should make dependencies visible.

Dependency views help teams answer:

Helpful panels may include:

This visibility is critical in distributed systems, where symptoms often appear far from the true source of the problem.

Many dashboards look impressive but are rarely opened when they matter most. Teams use dashboards consistently when they are focused, predictable, and directly helpful during daily operations and incidents.

A useful sre dashboard should mirror how people think during troubleshooting. Engineers usually start with a symptom, then move toward affected functionality, then toward a likely cause.

A good structure groups related signals by:

This makes it easier to move from “checkout latency is rising” to “payment API errors increased” to “the database connection pool is saturated” without opening five unrelated screens.

During an incident, nobody wants to decode chart labels, wonder what a color means, or compare mismatched time windows.

Design for quick scanning by using:

If your dashboard needs a long explanation before someone can use it, it is too complex for incident response.

A dashboard should lead directly to the next step. Every important panel should answer not just “what happened” but also “what do I do next?”

Make the dashboard actionable with links to:

This is one of the biggest differences between a dashboard teams admire and a dashboard teams actually depend on.

Dashboards decay over time. Services change. Dependencies move. Old panels stay behind long after they stop being useful.

Review your sre dashboard on a regular schedule and ask:

Retire dead panels, update labels, add context where people got stuck, and validate the dashboard in real incident reviews. The best dashboards evolve along with the service.

There is no single perfect sre dashboard design. The right structure depends on system complexity, team maturity, and operational goals. Still, a few common patterns show up repeatedly in strong implementations.

This is the minimum viable dashboard for a service team or on-call engineer. It is optimized for daily health checks and fast incident response.

A typical layout includes:

This kind of dashboard works well for a standalone API, web service, or internal platform component. It stays focused on the most important operational questions and avoids distractions.

Larger organizations often need a broader dashboard view that combines multiple services and audiences. This is not a replacement for service-level dashboards, but a summary layer above them.

It may include:

This pattern is useful for incident command, operations reviews, and leadership visibility. The key is to keep it high level and drill-down friendly, rather than trying to show every underlying metric on one page.

For microservices or event-driven architectures, teams often need a dependency-aware dashboard that highlights interactions across services.

Typical elements include:

This pattern is powerful during cascading failures because it helps responders understand blast radius and propagation paths. It is especially useful when one user-facing symptom may have multiple possible upstream causes.

Even experienced teams make predictable dashboard mistakes. Avoid these common problems:

A great sre dashboard is not the one with the most data. It is the one that helps the team make the next correct decision fastest.

Before you roll out your first sre dashboard, use this checklist to make sure it is useful in practice and not just visually complete.

A strong start does not require a huge dashboard. It requires a useful one. Begin with the signals that matter most to users and responders, keep the layout simple, and improve it after every incident review. Over time, your sre dashboard can become one of the most valuable tools in your reliability practice: not because it displays everything, but because it makes the right things obvious.

An effective SRE dashboard should show the most important reliability signals first, usually availability, latency, traffic, error rate, saturation, SLO status, and active incidents. It should also include enough context to help teams move quickly from detection to investigation.

An SRE dashboard is built for operational decisions, not just visibility. It focuses on service health, user impact, and reliability risk so responders can act faster during incidents.

The most important metrics usually align with the golden signals: latency, traffic, errors, and saturation. Many teams also add availability, error budget burn, and dependency health to make the dashboard more useful in real time.

Put high-level service status and user-facing metrics at the top, then add supporting panels for capacity, SLOs, alerts, dependencies, and recent deployments below. This layout helps on-call engineers understand what is broken, how serious it is, and where to investigate next.

Critical operational dashboards should refresh near real time so teams can respond to changes quickly. Trend and planning views can update less often because they are used for analysis rather than live incident response.

The Author

Lewis Chou

Senior Data Analyst at FanRuan

Related Articles

How to Build a Portfolio Management Reporting Dashboard for Executive Decision-Making

A strong portfolio management reporting dashboard helps executives make faster, better decisions about where to invest, what to stop, which risks to address, and how to reallocate constrained resources. For PMO leaders,

Yida Yin

Jan 01, 1970

How to Build a Customer Experience Dashboard by Team: KPIs for Executives, Support, Product, and Marketing

A $1 should help different teams act on the same customer reality without forcing everyone to work from the same screen. Executives need a high level view of loyalty, churn risk, and revenue impact. Support leaders need

Yida Yin

Jun 02, 2026

Construction Dashboard Guide: Connect Schedule, Cost, and Field Progress Without Spreadsheet Chaos

A $1 is not just a reporting screen. It is the operating layer that helps project managers, operations leaders, and executives connect three things that usually live in separate files: schedule, cost, and field progress.

Yida Yin

May 29, 2026