An engineering metrics dashboard gives enterprise teams one place to see whether software delivery is getting faster, quality is holding up, and developer workflows are improving or slowing down. For engineering leaders, platform teams, and delivery managers, the real value is not more reporting. It is better operational decisions.

In most enterprises, engineering data is fragmented across Git platforms, CI/CD tools, issue trackers, incident systems, and internal spreadsheets. That creates a familiar set of problems: leadership lacks a reliable delivery view, managers argue over metric definitions, teams get measured on noisy proxies, and improvement work stalls because nobody trusts the numbers. A well-designed dashboard fixes that by turning scattered activity into decision-ready signals.

The goal is simple: show trends, bottlenecks, and outcomes that help teams improve delivery predictability, reliability, and developer efficiency without turning measurement into surveillance.

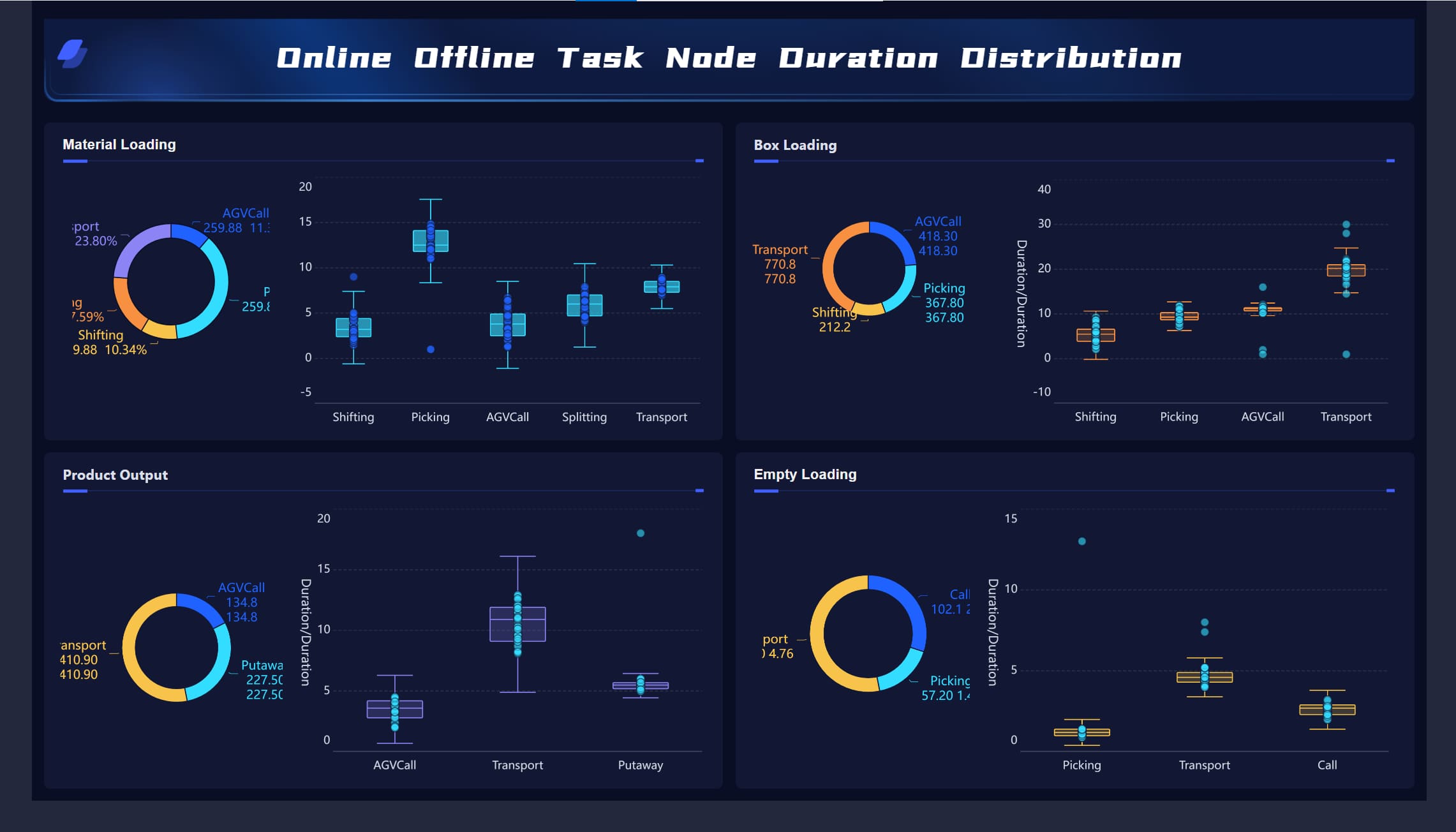

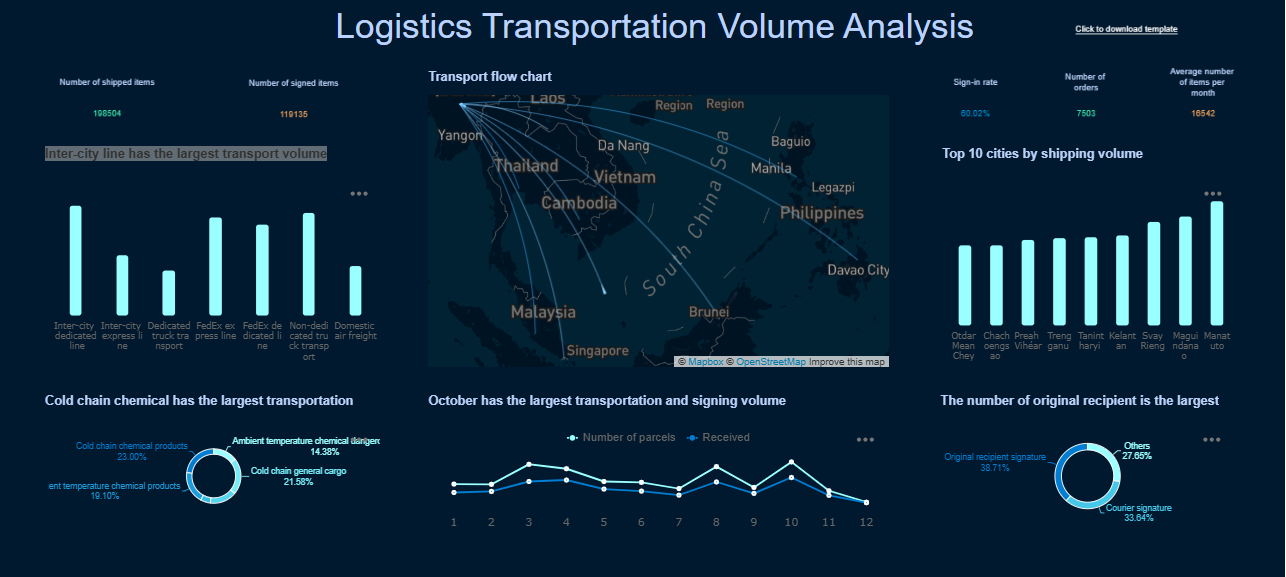

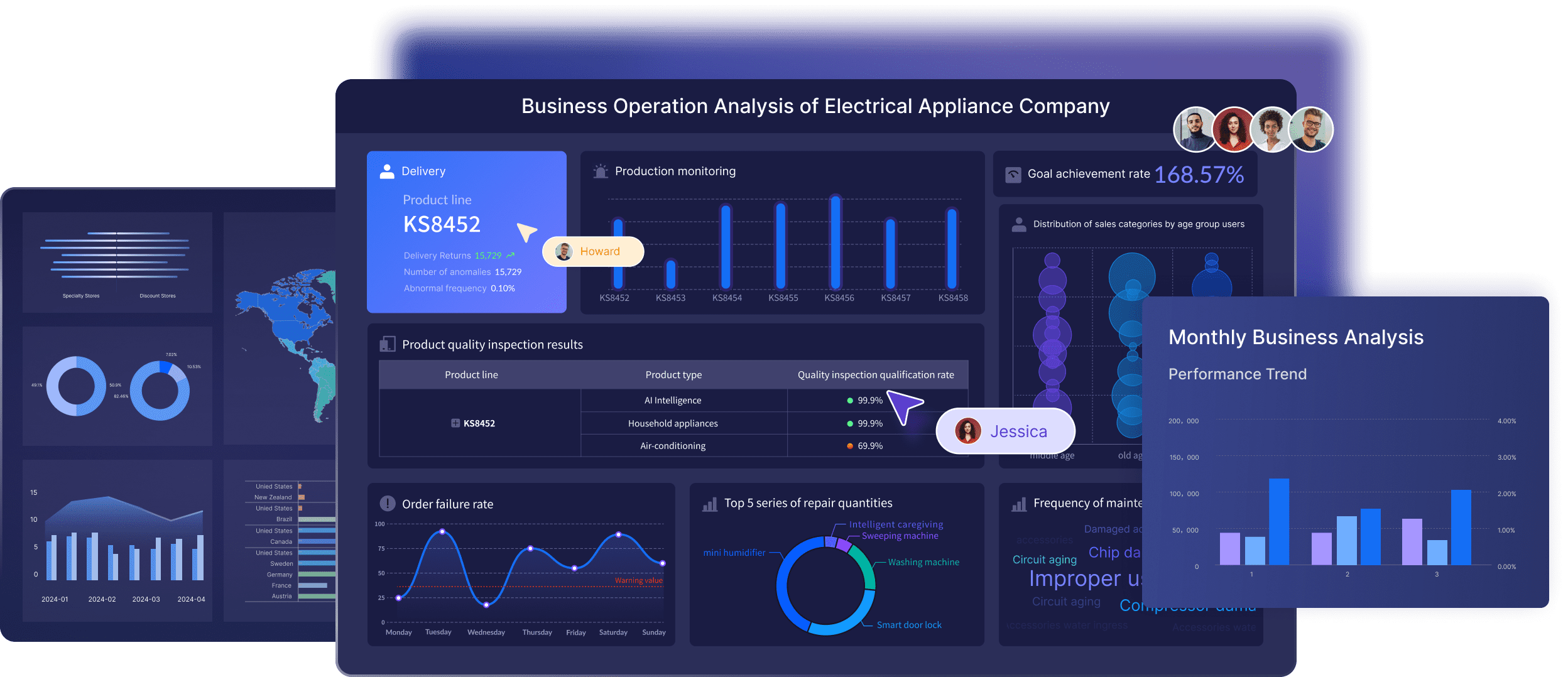

All dashboards in this article were created with FineBI.

A strong engineering metrics dashboard should create a shared view of three things: delivery speed, quality, and workflow health.

That shared view matters because enterprise delivery rarely breaks down from a single cause. Delays often sit between teams, approval layers, review queues, brittle pipelines, and inconsistent workflows. If leaders only look at raw output counts, they miss the system-level issues.

An effective dashboard turns disconnected engineering events into practical signals for:

It should also avoid the common trap of glorifying busywork. Commits, ticket counts, or PR volume alone do not explain engineering performance. Enterprise teams need context-rich trends that show where work slows, where quality degrades, and where teams are losing time.

Before selecting charts or integrations, define what business decisions the dashboard must support. This is where many enterprise reporting efforts fail. They start with available data instead of operational questions.

Each dashboard section should connect directly to an enterprise priority. For example:

This alignment keeps the engineering metrics dashboard useful at leadership level. It also prevents metrics sprawl, where dozens of charts exist but none answer a meaningful business question.

Separate executive needs from operational needs. Executives want a concise view of risk, trend direction, and cross-portfolio visibility. Managers and team leads need drill-down views that show where a bottleneck sits and what to change next.

A single dashboard should not try to satisfy every role with the same lens. Different stakeholders need different levels of abstraction.

Common audience layers include executives, managers, and team leads.

When one-size-fits-all reporting is forced on the organization, important context disappears. Teams end up debating fairness instead of improving systems.

Metrics should support coaching and process improvement, not individual surveillance. This is especially important in enterprise environments where dashboards can quickly become politicized.

Set clear guardrails from the start:

If teams do not share definitions, they will not trust trends. For example, “lead time” can mean request-to-production, first-commit-to-production, or PR-open-to-deploy depending on the organization. That ambiguity destroys dashboard credibility.
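To make the ambiguity concrete, the sketch below computes lead time for a single change under each of the three definitions mentioned above. The `ChangeRecord` type, its field names, and the sample timestamps are all hypothetical; a real pipeline would pull these events from Git, CI/CD, and issue-tracking systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ChangeRecord:
    """Timestamps for one change. Field names are hypothetical."""
    requested_at: datetime
    first_commit_at: datetime
    pr_opened_at: datetime
    deployed_at: datetime


def lead_times(change: ChangeRecord) -> dict[str, timedelta]:
    """Return the lead time of one change under three different definitions."""
    return {
        "request_to_production": change.deployed_at - change.requested_at,
        "first_commit_to_production": change.deployed_at - change.first_commit_at,
        "pr_open_to_deploy": change.deployed_at - change.pr_opened_at,
    }


# Sample data, invented for illustration only.
change = ChangeRecord(
    requested_at=datetime(2024, 3, 1, 9, 0),
    first_commit_at=datetime(2024, 3, 4, 9, 0),
    pr_opened_at=datetime(2024, 3, 6, 9, 0),
    deployed_at=datetime(2024, 3, 7, 9, 0),
)

for name, value in lead_times(change).items():
    print(f"{name}: {value.days} day(s)")
```

With these sample timestamps, the same change reports a lead time of 6 days, 3 days, or 1 day depending on the definition, which is why one definition has to be agreed on before trends are compared.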

The best engineering metrics dashboard uses a balanced measurement model. It should capture speed, quality, and workflow health together so leaders do not optimize one dimension at the expense of another.

Delivery metrics help enterprise teams understand how smoothly work moves through the system.

Key delivery measures include lead time, cycle time, deployment frequency, throughput, and work-in-progress.

These measures reveal where delivery is slowing. For example:

Quality metrics ensure delivery speed is not achieved by pushing risk downstream.

Core quality measures include change failure rate, mean time to restore, and escaped defects.

These metrics should always sit alongside delivery metrics. If speed improves while failure rate and escaped defects rise, the system is not improving. It is just shifting cost to production support and customer experience.
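As a rough illustration of reading speed and quality together, the sketch below compares two reporting periods and flags the case where deployments increase while change failure rate also rises. The `PeriodStats` shape, the function name, and the sample counts are assumptions made for this example, not a standard definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeriodStats:
    """Deployment counts for one reporting period (hypothetical shape)."""
    deployments: int
    failed_deployments: int

    @property
    def change_failure_rate(self) -> float:
        """Share of deployments that failed; 0.0 when nothing was deployed."""
        if self.deployments == 0:
            return 0.0
        return self.failed_deployments / self.deployments


def speed_is_shifting_cost(previous: PeriodStats, current: PeriodStats) -> bool:
    """True when deployment volume rose and the failure rate rose with it."""
    faster = current.deployments > previous.deployments
    riskier = current.change_failure_rate > previous.change_failure_rate
    return faster and riskier


# Sample data, invented for illustration only.
previous_period = PeriodStats(deployments=40, failed_deployments=4)   # 10% failed
current_period = PeriodStats(deployments=60, failed_deployments=12)   # 20% failed

print(speed_is_shifting_cost(previous_period, current_period))
```

A check like this is only a prompt for a conversation. It does not say why failures rose, so it works best next to drill-down views rather than as a pass/fail gate.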

Developer efficiency is about how effectively teams can move work through the system, not how much visible activity individuals generate.

Useful developer efficiency measures include review time, CI delays, and build time.

These metrics are most powerful when paired with team feedback. If build time worsens and developers report reduced focus, the dashboard can support a business case for platform investment or process redesign.
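One way to show whether build time is worsening is a weekly median, which is less sensitive to a single slow build than an average. The sketch below assumes build records arrive as `(date, minutes)` pairs; that input shape, the function name, and the sample values are hypothetical.

```python
from collections import defaultdict
from collections.abc import Iterable
from datetime import date
from statistics import median


def weekly_median_build_minutes(
    builds: Iterable[tuple[date, float]],
) -> dict[tuple[int, int], float]:
    """Group builds by ISO (year, week) and return the median duration per week."""
    by_week: dict[tuple[int, int], list[float]] = defaultdict(list)
    for day, minutes in builds:
        iso = day.isocalendar()
        by_week[(iso[0], iso[1])].append(minutes)
    return {week: median(values) for week, values in sorted(by_week.items())}


# Sample data, invented for illustration only.
sample_builds = [
    (date(2024, 3, 4), 12),
    (date(2024, 3, 5), 14),
    (date(2024, 3, 6), 13),
    (date(2024, 3, 11), 18),
    (date(2024, 3, 12), 20),
    (date(2024, 3, 13), 19),
]

for week, minutes in weekly_median_build_minutes(sample_builds).items():
    print(week, minutes)
```

In this sample the weekly median rises from 13 to 19 minutes, the kind of trend that can be set beside team feedback when making a case for platform investment.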

To make the engineering metrics dashboard actionable, group measures into a small set of enterprise-friendly KPIs.

Operational KPIs

Strategic KPIs

Improvement KPIs

A practical rule for enterprise cadence:

A dashboard only works if it changes behavior. That means designing for decisions, not for display density.

Create role-based layers in the engineering metrics dashboard.

Recommended structure:

Executive summary view

Manager view

Team operational view

Comparisons should only be shown when context is truly comparable. A platform team supporting shared services should not be benchmarked the same way as a feature delivery squad with low operational burden.

The best enterprise dashboards are visually disciplined.

Use:

Avoid cluttered chart collections that force users to interpret too much at once. A few well-structured visuals outperform a wall of colorful but disconnected widgets.

Trust comes from data quality and operational ownership.

Pull data from stable enterprise systems such as Git platforms, CI/CD tools, issue trackers, and incident systems.

Standardize these elements from the start:

If these foundations are missing, the engineering metrics dashboard becomes a recurring argument instead of a management tool.

Here is the practical rollout approach I would recommend in a large organization.

Do not begin with the entire engineering organization. Start with one business unit, product line, or value stream.

A good pilot has:

Use the pilot to validate:

This is where you learn which metrics look smart on paper but are not decision-useful in practice.

Once the pilot scope is clear, connect systems and lock metric logic.

For each metric, document:

Common enterprise issues to resolve early include:

This documentation is what separates a durable engineering metrics dashboard from a temporary reporting experiment.
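Metric documentation can also be kept as a small structured record so that gaps are easy to spot. The sketch below is one possible shape, not a prescribed schema: the `MetricDefinition` fields (start and end events, source systems, exclusions, intended use) and the sample values are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """One documented metric. All field names are hypothetical."""
    name: str
    start_event: str
    end_event: str
    source_systems: tuple[str, ...]
    exclusions: tuple[str, ...] = ()
    intended_use: str = ""


def undocumented_fields(metric: MetricDefinition) -> list[str]:
    """List required fields left empty. Exclusions may legitimately be empty."""
    required = ("start_event", "end_event", "source_systems", "intended_use")
    return [name for name in required if not getattr(metric, name)]


# Sample data, invented for illustration only.
lead_time = MetricDefinition(
    name="lead_time",
    start_event="first commit",
    end_event="production deploy",
    source_systems=("git", "ci_cd"),
    exclusions=("reverted changes",),
)

print(undocumented_fields(lead_time))
```

Here the record is flagged because `intended_use` was never filled in. A check like this could run whenever a metric is added, so that undocumented metrics do not reach a shared view unnoticed.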

Dashboard governance is as important as dashboard design.

Assign owners for:

Then embed the dashboard into recurring operating routines:

The key is to convert findings into actions. If a dashboard repeatedly shows review latency or test instability and nothing changes, users will stop caring.

Most enterprise dashboard failures come from misuse, not from tooling.

Avoid these common errors:

Strong teams treat the engineering metrics dashboard as a living operating system.

They improve it by:

In modern software organizations, the dashboard should help teams ask better questions:

Building an enterprise-grade engineering metrics dashboard manually is possible, but it is rarely efficient. You need data pipelines, semantic definitions, role-based views, refresh governance, and ongoing maintenance across multiple engineering systems. That complexity grows quickly as teams, tools, and reporting needs expand.

This is where FineBI becomes the practical choice.

Instead of stitching together custom dashboards from scratch, you can start from FineBI's ready-made templates and automate this workflow. FineBI helps enterprise teams unify engineering data, standardize KPI definitions, create role-based dashboard views, and maintain trusted reporting without excessive manual effort.

With FineBI, you can:

Utilize ready-made templates and automate this entire workflow with FineBI


For organizations that want delivery, quality, and developer efficiency insights without building and maintaining everything by hand, FineBI is the faster path to a reliable engineering metrics dashboard.

A strong dashboard usually combines delivery, quality, and developer efficiency metrics. Common examples include lead time, cycle time, deployment frequency, change failure rate, mean time to restore, review time, CI delays, and work-in-progress trends.

Start by defining the business decisions the dashboard needs to support, then map those questions to a small set of trusted metrics. After that, connect data from tools like Git, CI/CD, issue tracking, and incident systems, and create role-based views for executives, managers, and team leads.

A healthy dashboard measures systems, workflows, and trends rather than judging individual engineers by raw activity counts. Its purpose is to reveal bottlenecks, reliability risks, and process friction so teams can improve together.

The most useful delivery metrics usually include lead time, cycle time, deployment frequency, throughput, and work-in-progress. These help teams see how fast work moves, where it stalls, and whether delivery is becoming more predictable over time.

Trust breaks down when data comes from disconnected tools, metric definitions are inconsistent, or exclusions are not documented. A dashboard becomes credible when teams agree on definitions, standardize sources, and clearly explain how each metric should be used.

The Author

Lewis Chou

Senior Data Analyst at FanRuan
