An ETL tool is a platform that extracts data from multiple sources, transforms it into a usable format, and loads it into a warehouse, lake, application, or operational system for analytics and business workflows.

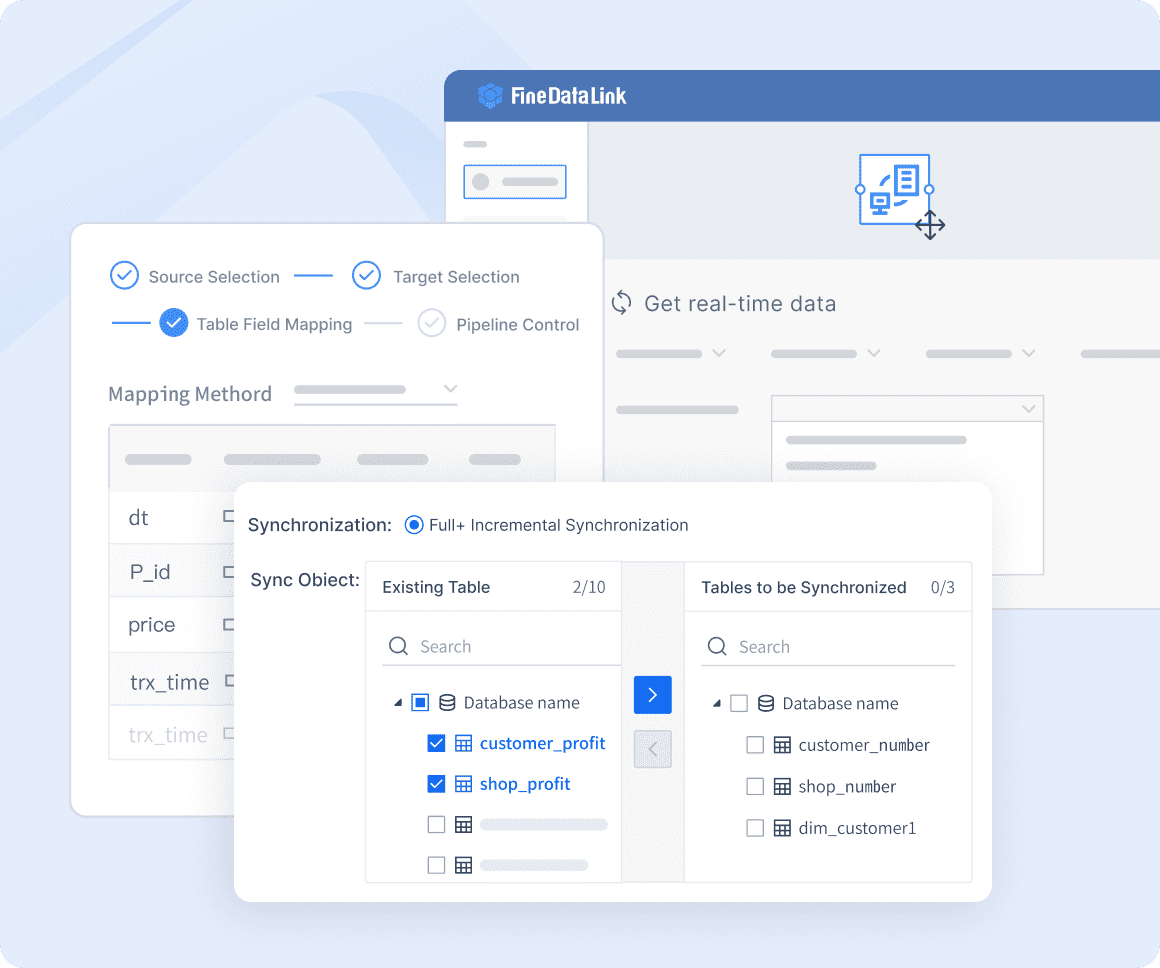

One-sentence overview: FineDataLink is a modern data integration and ETL platform built for teams that need fast pipeline deployment, real-time and batch synchronization, broad connectivity, and lower long-term maintenance effort.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Mid-market and enterprise teams that want a flexible ETL tool with both batch and real-time capabilities without taking on heavy engineering overhead.

One-sentence overview: Fivetran is a managed ELT platform known for automated connectors, schema change handling, and low day-to-day maintenance.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Data teams that want turnkey ingestion into cloud warehouses with minimal infrastructure work.

One-sentence overview: Hevo Data is a cloud-native ETL/ELT platform that emphasizes ease of use, no-code setup, and quick integration with common SaaS and database sources.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Lean analytics teams that need to get data flowing quickly without dedicated platform engineering.

One-sentence overview: Stitch is a lightweight ETL service focused on straightforward data ingestion into warehouses for analytics use cases.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Startups and small teams with relatively standard ingestion needs.

One-sentence overview: Airbyte is an open-source and commercial data movement platform that gives engineering teams strong extensibility and custom connector flexibility.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Data engineering teams that need custom connectors or want greater platform control.

One-sentence overview: Matillion is a cloud-focused ETL/ELT platform designed for teams that want visual orchestration with deeper transformation control inside warehouse-centric architectures.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Data teams that want more control over transformations without building every pipeline from scratch.

One-sentence overview: AWS Glue is a serverless ETL service for teams operating heavily within the AWS ecosystem and needing scalable batch or streaming data pipelines.

Key Features:

Pros & Cons:

Best For (Target user/scenario): AWS-centric organizations with in-house engineering capability.

One-sentence overview: Azure Data Factory is Microsoft’s managed data integration service for orchestrating ETL, ELT, and hybrid data movement across cloud and on-prem systems.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Organizations standardized on Azure and Microsoft data services.

One-sentence overview: Apache NiFi is a flow-based data integration platform built for routing, transformation, and movement of data with fine-grained operational control.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Technical teams managing internal data flows, streaming patterns, and infrastructure-heavy environments.

One-sentence overview: Informatica is an enterprise-grade ETL and data management platform known for governance, metadata, compliance, and large-scale integration programs.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Large enterprises with strict compliance, governance, and multi-team data integration requirements.

One-sentence overview: Talend is a long-standing data integration platform that combines ETL, data quality, and governance for complex enterprise environments.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Enterprises that need ETL plus data quality and hybrid deployment support.

One-sentence overview: IBM DataStage is an enterprise ETL platform built for large-scale transformation, governance, and integration in regulated environments.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Large regulated organizations with established enterprise data programs.

One-sentence overview: Oracle Data Integrator is an enterprise data integration platform optimized for Oracle-heavy ecosystems and large-scale transformation workloads.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Enterprises deeply invested in Oracle databases and applications.

One-sentence overview: Dataddo is a no-code ETL/ELT platform that aims to keep setup simple and pricing easier to understand for smaller teams.

Key Features:

Pros & Cons:

Best For (Target user/scenario): SMBs and lean analytics teams that need simple, business-friendly data movement.

One-sentence overview: Meltano is an open-source, code-first data integration platform built for teams that want Singer-based flexibility with modern workflow management.

Key Features:

Pros & Cons:

Best For (Target user/scenario): Small but technical teams that prioritize flexibility and open tooling over convenience.

Choosing from any ETL tools list is easier when you evaluate platforms against your actual workloads instead of headline feature counts.

Start by defining your primary use case:

Next, separate must-have requirements into clear buckets:

Finally, decide how your team will weigh trade-offs between:

This is where many buyers misjudge tools. A platform that looks inexpensive at entry level may become costly through row-based pricing, premium connectors, or hidden maintenance work. Conversely, a platform with a higher subscription price may reduce engineering burden enough to lower total cost of ownership.

Connector coverage is often the first filter in an ETL tools list, but raw connector count is not enough. A platform with 500 connectors is not automatically a better fit than one with 150 if the latter covers your core systems more reliably.
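The point about raw counts can be made concrete with a small Python sketch. It measures what share of your required sources a catalog covers, rather than how large the catalog is. The source names and both catalogs below are hypothetical and do not describe any vendor in this article.

```python
def coverage(required, supported):
    """Return the fraction of required sources that a tool supports."""
    if not required:
        return 1.0
    return len(required & supported) / len(required)


# Hypothetical list of systems your team actually needs to connect.
required = {"postgres", "salesforce", "stripe", "s3"}

# Hypothetical catalogs: tool_a is large but misses two core systems,
# tool_b is small but covers every required source.
tool_a = {f"connector_{i}" for i in range(500)} | {"postgres", "s3"}
tool_b = {"postgres", "salesforce", "stripe", "s3", "mysql"}

print(len(tool_a), coverage(required, tool_a))  # 502 0.5
print(len(tool_b), coverage(required, tool_b))  # 5 1.0
```

A coverage score like this only answers whether a connector exists. Connector quality still has to be checked separately.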

When comparing connector breadth, review support across:

Then go deeper into connector quality:

Tools such as Fivetran and Hevo prioritize managed connectors and simple setup. Platforms like Airbyte and Meltano offer more customization. FineDataLink stands out for teams that need both mainstream integration coverage and practical support for hybrid, real-time, and multi-destination scenarios.

ETL pricing is rarely simple. Buyers need to compare more than the starting monthly fee.

Common pricing models include:

To estimate total cost of ownership, account for:

For example:

A useful buying question is: What will this tool cost after 12 months of growth, not on day one?
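One way to approach that question is a rough 12-month projection that combines subscription fees, usage charges, and engineering time. The Python sketch below is a simplified model, not a vendor calculator. Every price, growth rate, and hour estimate in it is an assumption chosen for illustration.

```python
def twelve_month_cost(base_fee, price_per_million_rows, start_rows_millions,
                      monthly_growth, engineer_hours_per_month, hourly_rate):
    """Sum license, usage, and maintenance cost over 12 months of growth."""
    total = 0.0
    rows = start_rows_millions
    for _ in range(12):
        total += base_fee + rows * price_per_million_rows   # software spend
        total += engineer_hours_per_month * hourly_rate     # maintenance labor
        rows *= 1 + monthly_growth                          # volume grows monthly
    return round(total, 2)


# Hypothetical usage-priced tool: low entry fee, more hands-on upkeep.
usage_based = twelve_month_cost(
    base_fee=100, price_per_million_rows=50, start_rows_millions=10,
    monthly_growth=0.10, engineer_hours_per_month=20, hourly_rate=80,
)

# Hypothetical flat-fee managed tool: higher subscription, less upkeep.
managed = twelve_month_cost(
    base_fee=2000, price_per_million_rows=0, start_rows_millions=10,
    monthly_growth=0.10, engineer_hours_per_month=4, hourly_rate=80,
)

print(usage_based, managed)
```

With these made-up inputs, the tool with the lower entry price ends up costing more over the year, because usage charges and maintenance hours grow while the flat fee does not. Change the inputs and the ranking can flip, which is why the projection should use your own volumes.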

Maintenance overhead is where tool economics often change.

Review:

Then estimate ongoing work tied to:

Fully managed tools reduce operational burden but may limit customization. Engineering-first and self-hosted tools offer flexibility but often shift support responsibilities onto your team. FineDataLink, Fivetran, and Hevo are often evaluated by teams trying to minimize maintenance, while Airbyte, Meltano, NiFi, and cloud-native services like Glue or ADF appeal to teams comfortable owning more of the stack.

Here is a practical way to compare the 15 tools by connector profile rather than marketing claims.

| Tool | SaaS Connectors | Database Connectors | Warehouse/Lake Support | API/Custom Source Flexibility | Streaming/Real-Time Support | General Connector Profile |
|---|---|---|---|---|---|---|
| FineDataLink | Strong | Strong | Strong | Good | Strong | Balanced for hybrid enterprise use |
| Fivetran | Very strong | Strong | Very strong | Moderate | Strong | Best-in-class managed coverage |
| Hevo Data | Strong | Strong | Strong | Moderate | Strong | Good cloud-native breadth |
| Stitch | Moderate | Strong | Strong | Limited | Moderate | Basic ingestion-focused coverage |
| Airbyte | Strong | Strong | Strong | Very strong | Moderate | Excellent for extensibility |
| Matillion | Moderate | Strong | Very strong | Moderate | Moderate | Strong for warehouse-centric stacks |
| AWS Glue | Moderate | Strong | Very strong in AWS | Strong | Strong | Best in AWS ecosystem |
| Azure Data Factory | Moderate | Strong | Strong in Azure | Strong | Moderate | Best in Microsoft environments |
| Apache NiFi | Limited | Strong | Strong | Very strong | Strong | Great for internal flow engineering |
| Informatica | Very strong | Very strong | Very strong | Strong | Strong | Enterprise-grade breadth |
| Talend | Strong | Very strong | Strong | Strong | Moderate | Broad hybrid support |
| IBM DataStage | Moderate | Very strong | Strong | Moderate | Moderate | Enterprise legacy + modern mix |
| Oracle Data Integrator | Moderate | Strong | Strong | Limited | Moderate | Best in Oracle-centric estates |
| Dataddo | Moderate | Moderate | Strong | Limited | Moderate | Lean business-focused coverage |
| Meltano | Variable | Strong | Strong | Very strong | Limited | Depends on engineering ownership |

Key takeaways:

Pricing fit often changes by company size and pipeline complexity.

Best options typically include:

These platforms fit smaller budgets or technical teams willing to trade convenience for lower software spend. However, usage-based pricing can become less favorable as data volumes grow.

Best options often include:

Mid-market buyers usually need a better balance between connector reliability, governance, and manageable maintenance. This is where a tool like FineDataLink can be attractive because it supports both fast implementation and more complex sync requirements without forcing an all-in enterprise platform purchase.

Best options usually include:

Large organizations care more about governance, deployment flexibility, auditability, and support models than about the lowest entry-level subscription.

A simple way to understand operational overhead is to group tools into three categories.

These tools are best when the priority is getting reliable pipelines live quickly and avoiding constant connector troubleshooting.

These platforms offer more flexibility or enterprise depth, but they usually require more active management, design discipline, and internal ownership.

These are better suited for organizations that value customization, platform control, or strict architecture alignment over convenience.

Vendor lock-in usually appears in three places:

Open and code-first tools reduce lock-in risk but increase ownership burden. Managed tools reduce effort but may make migration harder later.

Check:

This matters more than many buyers expect. Ask:

Strong evaluation signals include:

Your best choice depends on both your current stack and how mature your data practice is.

Use this simplified matching logic:

If your team is still early in its data maturity, prioritize:

If your team is mature and engineering-heavy, prioritize:

A strong proof of concept should not be a polished demo. It should test realistic workloads.

Use 3 to 5 representative scenarios:

During the proof of concept, score each tool on:

This is often where theoretical rankings change. A platform that looks ideal on paper may fail on one critical connector or become too expensive once realistic data volume is modeled.
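A proof-of-concept scorecard can capture both effects: a weighted score for overall fit, plus hard minimums that disqualify a tool when a critical area fails. The Python sketch below is one possible layout. The criteria names, weights, minimums, and scores are all hypothetical placeholders for your own.

```python
# Hypothetical criteria and weights; replace with your own priorities.
WEIGHTS = {"connector_reliability": 4, "cost_at_volume": 3, "maintenance_effort": 3}

# Hypothetical hard minimums: a score below these disqualifies the tool.
CRITICAL_MIN = {"connector_reliability": 3}


def weighted_score(scores, weights=WEIGHTS):
    """Weighted average of 1-5 scores across all criteria."""
    return sum(scores[name] * w for name, w in weights.items()) / sum(weights.values())


def passes_gates(scores, minimums=CRITICAL_MIN):
    """True only if every critical criterion meets its minimum."""
    return all(scores[name] >= floor for name, floor in minimums.items())


# Made-up results from testing two tools on realistic workloads.
poc_results = {
    "tool_x": {"connector_reliability": 2, "cost_at_volume": 5, "maintenance_effort": 5},
    "tool_y": {"connector_reliability": 4, "cost_at_volume": 3, "maintenance_effort": 3},
}

# Keep only tools that pass the gates, best weighted score first.
shortlist = sorted(
    (name for name, s in poc_results.items() if passes_gates(s)),
    key=lambda name: weighted_score(poc_results[name]),
    reverse=True,
)
print(shortlist)  # ['tool_y']
```

Here `tool_x` has the higher weighted score (3.8 versus 3.4) but is dropped because it fails the critical connector test, so an attractive average does not hide a failure in an area the team cannot work around.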

Before buying, confirm the following:

If you need the shortest path to reliable data movement, managed platforms remain the safest option. If you need deeper customization, open and engineering-first tools still offer the most control. If you operate under strict governance or hybrid architecture constraints, enterprise platforms justify their complexity.

For many modern teams, the right choice is not the platform with the biggest connector count or the lowest advertised starting price. It is the one that matches your architecture, keeps maintenance predictable, and scales without forcing a full rebuild later.

In this list of ETL tools, FineDataLink is especially worth shortlisting for teams that want a practical mix of connector coverage, real-time and batch integration, manageable ownership cost, and lower maintenance pressure. It is particularly well suited for organizations that need more than simple warehouse ingestion but do not want the operational burden of stitching together multiple tools.

If you are evaluating ETL platforms for 2026, build a shortlist around your real workloads, test failure scenarios early, and compare total ownership cost instead of feature counts alone. That is how you choose a platform that will still work for your team a year after implementation, not just during procurement.

Focus on connector coverage, pricing model, ease of setup, transformation depth, real-time or batch support, and expected maintenance effort. The best choice depends on your data sources, team skills, and whether you need simple ingestion or more advanced pipeline control.

ETL transforms data before loading it into the destination, while ELT loads data first and performs transformations inside the warehouse or lake. ELT is often preferred in cloud data stacks because it can scale with warehouse compute.
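The difference in ordering is easier to see in a small Python sketch. It uses an in-memory SQLite database as a stand-in for a warehouse, which is an assumption made only to keep the example self-contained. The table names and sample rows are hypothetical.

```python
import sqlite3

# Hypothetical raw records as they might arrive from a source system.
raw_orders = [("a1", " 19.90 ", "usd"), ("a2", "5.00", "USD")]


def clean(row):
    """Pipeline-side transformation used by the ETL path."""
    order_id, amount, currency = row
    return (order_id, float(amount.strip()), currency.upper())


# ETL: transform in the pipeline first, then load the cleaned rows.
etl_db = sqlite3.connect(":memory:")
etl_db.execute("CREATE TABLE orders (id TEXT, amount REAL, currency TEXT)")
etl_db.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                   [clean(r) for r in raw_orders])

# ELT: load the raw rows as-is, then transform with SQL in the destination.
elt_db = sqlite3.connect(":memory:")
elt_db.execute("CREATE TABLE raw_orders (id TEXT, amount TEXT, currency TEXT)")
elt_db.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_orders)
elt_db.execute("""
    CREATE TABLE orders AS
    SELECT id,
           CAST(TRIM(amount) AS REAL) AS amount,
           UPPER(currency) AS currency
    FROM raw_orders
""")

etl_rows = etl_db.execute("SELECT * FROM orders ORDER BY id").fetchall()
elt_rows = elt_db.execute("SELECT * FROM orders ORDER BY id").fetchall()
print(etl_rows == elt_rows)  # True
```

Both paths produce the same cleaned table. What changes is where the transformation runs, and in the ELT path the raw table also remains available in the destination for later reprocessing.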

Managed ETL tools are usually faster to deploy and require less day-to-day maintenance, which makes them attractive for lean teams. Open-source platforms can offer more flexibility and custom connector options, but they often demand more engineering time and operational ownership.

Tools with CDC and streaming or near-real-time sync are typically the best fit for low-latency pipelines. Platforms such as FineDataLink, Hevo Data, Fivetran, and AWS Glue are commonly considered when teams need fresher data delivery.

ETL pricing varies widely by vendor and may be based on usage, rows processed, connectors, compute, or fixed subscriptions. Total cost should include not just license fees, but also engineering time, infrastructure, and ongoing maintenance.

The Author

Lewis Chou

Senior Data Analyst at FanRuan
